NPTEL Deep Learning Week 2 Assignment Answers 2025

1. Suppose you are solving an n-class problem. How many discriminant functions will you need?

a. n-1

b. n

c. n+1

d. n-2

Answer :-

2. Suppose we choose the discriminant function g_i(x) as a function of the posterior probability, i.e. g_i(x) = f(P(w_i | x)). Which of the following cannot be the function f()?

a. f(x) = a^x, where a > 1

b. f(x) = a^(-x), where a > 1

c. f(x) = 2x + 3

d. f(x) = exp(x)

Answer :-
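
As background for this question: replacing each g_i(x) by f(g_i(x)) leaves the classification unchanged only when f is monotonically increasing, because the largest posterior must still produce the largest discriminant value. The sketch below checks this numerically. The posterior values and the helper name `winner` are hypothetical, chosen only for illustration.

```python
import math

# Hypothetical posterior probabilities P(w_i | x) for three classes.
posteriors = [0.2, 0.5, 0.3]

def winner(f, values):
    """Index of the class with the largest discriminant f(posterior)."""
    scores = [f(v) for v in values]
    return scores.index(max(scores))

# Decision made directly on the posteriors.
baseline = winner(lambda p: p, posteriors)

# Increasing transforms keep the same winner.
linear = winner(lambda p: 2 * p + 3, posteriors)
exponential = winner(math.exp, posteriors)

# A decreasing transform (here 2 ** -p) reverses the ordering,
# so the class with the smallest posterior wins instead.
decreasing = winner(lambda p: 2.0 ** (-p), posteriors)

print(baseline, linear, exponential, decreasing)
```

In this toy case the first three decisions agree, while the decreasing transform picks a different class.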

3. What will be the nature of the decision surface when the covariance matrices of the different classes are identical but otherwise arbitrary? (Assume all classes have equal class probabilities.)

a. Always orthogonal to two surfaces

b. Generally not orthogonal to two surfaces

c. Bisector of the line joining the two means, but not always orthogonal to the two surfaces.

d. Arbitrary

Answer :-

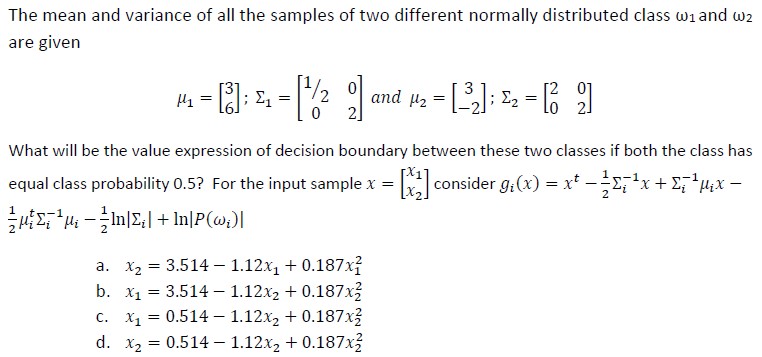

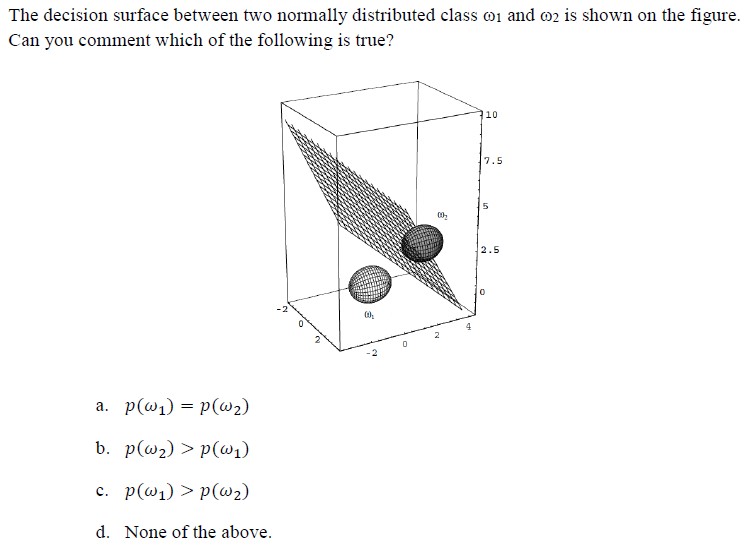

4.

Answer :-

5. For a two-class problem, the linear discriminant function is given by g(x) = a^T y, where y is the augmented feature vector. What is the update rule for finding the weight vector a?

a. Adding the sum of all misclassified augmented feature vectors, multiplied by the learning rate, to the current weight vector.

b. Subtracting the sum of all misclassified augmented feature vectors, multiplied by the learning rate, from the current weight vector.

c. Adding the sum of all augmented feature vectors belonging to the positive class, multiplied by the learning rate, to the current weight vector.

d. Subtracting the sum of all augmented feature vectors belonging to the negative class, multiplied by the learning rate, from the current weight vector.

Answer :-
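
As background for this question, here is a minimal sketch of the batch perceptron procedure as it is usually presented in pattern-recognition texts. It assumes the common convention that negative-class vectors are negated beforehand, so a sample y counts as misclassified whenever a^T y <= 0. The function names, the toy data, and the default learning rate are hypothetical.

```python
def dot(a, y):
    """Inner product a^T y of two equal-length vectors."""
    return sum(ai * yi for ai, yi in zip(a, y))

def batch_perceptron(samples, eta=1.0, max_epochs=100):
    """Batch perceptron on sign-normalized augmented vectors.

    Assumption: vectors of the negative class were negated already,
    so every correctly classified sample satisfies a^T y > 0.
    """
    a = [0.0] * len(samples[0])
    for _ in range(max_epochs):
        misclassified = [y for y in samples if dot(a, y) <= 0]
        if not misclassified:
            break  # every sample is on the correct side
        # Add eta times the sum of the misclassified vectors to a.
        for j in range(len(a)):
            a[j] += eta * sum(y[j] for y in misclassified)
    return a

# Toy, linearly separable data: augmented vectors [1, x1, x2].
positive = [[1.0, 2.0, 2.0], [1.0, 3.0, 1.0]]
negative = [[1.0, -1.0, -2.0], [1.0, -2.0, -1.0]]
samples = positive + [[-v for v in y] for y in negative]

a = batch_perceptron(samples)
print(a)
```

On linearly separable data such as this toy set, the loop stops once no sample is misclassified.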

6. For a minimum distance classifier, which of the following must be satisfied?

a. All the classes should have identical covariance matrices that are also diagonal.

b. All the classes should have identical covariance matrices that are otherwise arbitrary.

c. All the classes should have equal class probabilities.

d. None of the above.

Answer :-

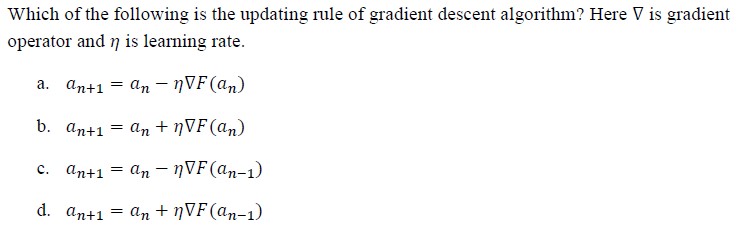

7.

Answer :-

8.

Answer :-

9. In the k-nearest neighbours (k-NN) algorithm, how do we classify an unknown object?

a. Assigning the label which is most frequent among the k nearest training samples.

b. Assigning the unknown object to the class of its nearest neighbour among the training samples.

c. Assigning the label which is most frequent among all the training samples except the k farthest neighbours.

d. None of these.

Answer :-
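
As background for this question, the standard k-NN procedure can be sketched as a majority vote over the k training samples closest to the query. This is a minimal illustration using Euclidean distance. The function name `knn_classify`, the toy data, and the choice k = 3 are hypothetical, and ties are broken arbitrarily in this sketch.

```python
import math
from collections import Counter

def knn_classify(train, query, k=3):
    """Label a query point by majority vote among its k nearest neighbours.

    train: list of (feature_vector, label) pairs.
    """
    # Sort the training samples by Euclidean distance to the query.
    neighbours = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    # Count the labels of the k closest samples and return the most frequent.
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0]

# Toy training set with two well-separated clusters.
train = [
    ((0.0, 0.0), "A"), ((0.0, 1.0), "A"), ((1.0, 0.0), "A"),
    ((5.0, 5.0), "B"), ((5.0, 6.0), "B"), ((6.0, 5.0), "B"),
]

near_a = knn_classify(train, (0.5, 0.5))
near_b = knn_classify(train, (5.5, 5.5))
print(near_a, near_b)
```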

10. What is the direction of the weight vector with respect to the decision surface for a linear classifier?

a. Parallel

b. Normal

c. At an inclination of 45°

d. Arbitrary

Answer :-
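
As background for this question, the geometry of a linear decision surface g(x) = w^T x + b = 0 can be checked numerically: for any two points on the surface, the vector joining them has zero inner product with w. The weights, bias, and points below are hypothetical values picked so that the arithmetic is exact.

```python
# Hypothetical linear discriminant g(x) = w^T x + b in two dimensions.
w = [2.0, -1.0]
b = 3.0

def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))

def g(x):
    """Value of the linear discriminant at point x."""
    return dot(w, x) + b

# Two points on the decision surface g(x) = 0, i.e. on the line x2 = 2*x1 + 3.
p = [0.0, 3.0]
q = [1.0, 5.0]

# A vector lying inside the decision surface.
along_surface = [qi - pi for qi, pi in zip(q, p)]

# Zero inner product means w is perpendicular to that vector.
print(g(p), g(q), dot(w, along_surface))
```

Since p and q were arbitrary points on the surface, the same holds for every direction lying in it.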